The era of one-size-fits-all AI is over. In 2025 and beyond, forward-thinking enterprises are making a decisive shift—from relying on generic tools like ChatGPT to building their own custom GPTs (Generative Pre-trained Transformers) tailored to their unique business needs. From customer service automation and internal knowledge management to marketing intelligence and code generation, enterprise-grade custom GPTs are becoming a strategic necessity, not just a tech trend.

According to McKinsey’s 2023 report on the economic potential of generative AI, the technology could add $2.6 trillion to $4.4 trillion annually to the global economy. Meanwhile, Gartner predicts that by 2026, more than 80% of enterprises will have used generative AI APIs or deployed GenAI-enabled applications, up from less than 5% in 2023. The message is clear: enterprises that don’t build their own AI capabilities risk falling behind.

But why are companies investing millions in building custom GPTs when powerful off-the-shelf solutions already exist? And how are they doing it? In this comprehensive guide, we’ll explore the driving forces behind the enterprise GPT movement, real-world use cases, the technology stack powering custom GPTs, and how your organization can get started.

The Enterprise GPT Movement: Why Now?

Several converging factors are driving enterprises to build their own GPTs rather than relying solely on third-party AI services. Understanding these drivers is essential for any business leader evaluating their AI strategy.

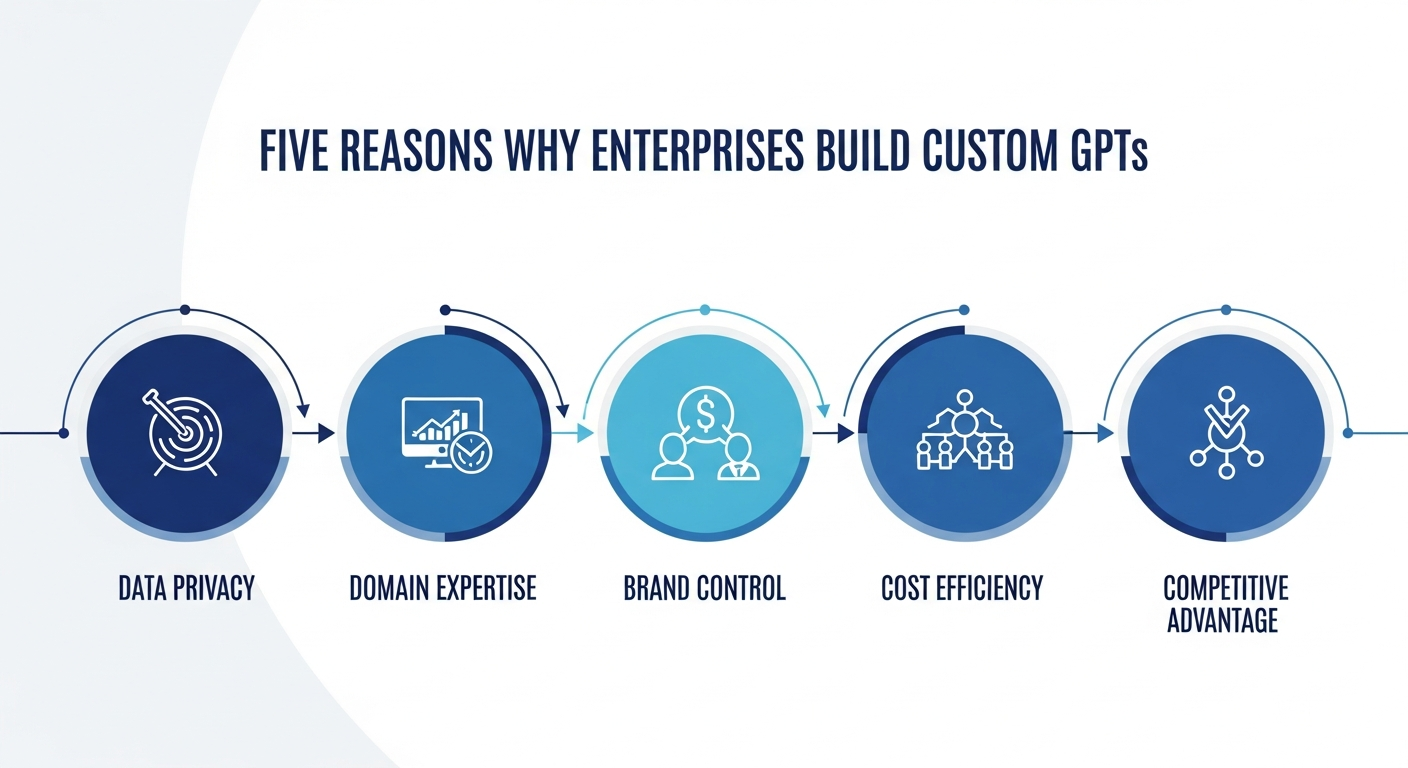

1. Data Privacy and Regulatory Compliance

Public AI assistants like ChatGPT and Claude are powerful, but they come with inherent risks that enterprise CISOs and compliance officers cannot ignore:

- Data Leakage Risk: Sensitive or proprietary information could be inadvertently used in model training or exposed through external API calls

- Regulatory Challenges: Industries like finance, healthcare, and defense face GDPR, HIPAA, and CCPA requirements, along with audit frameworks like SOC 2, that demand strict control over where data is stored and processed

- Intellectual Property Concerns: Trade secrets, proprietary algorithms, and confidential business strategies may call for isolated or even air-gapped AI environments

- Audit Trail Requirements: Many regulations require complete logging of AI interactions, which is difficult with third-party services

Cisco’s Data Privacy Benchmark Study 2024 found that 96% of companies believe privacy and data sovereignty are critical in AI adoption. This alone is pushing enterprises toward self-hosted, custom GPT solutions.

2. Need for Domain-Specific Intelligence

Generic GPTs are trained on the internet—a vast but unfocused dataset. Enterprises need AI that understands their specific context:

- Internal Knowledge: Product manuals, SOPs, HR policies, and technical documentation

- Industry Terminology: Healthcare codes, legal precedents, financial regulations, and engineering standards

- Business Context: CRM data, ERP workflows, CMMS maintenance records, and customer interaction history

- Proprietary Data: Research findings, competitive intelligence, and strategic planning documents

Custom GPTs excel at providing contextual, accurate responses when fine-tuned on proprietary datasets. According to research published on arXiv, domain-specific fine-tuning combined with RAG (Retrieval Augmented Generation), which supplies the model with relevant documents at query time, can reduce AI hallucinations by up to 70% compared to generic models. This translates directly to:

- Higher accuracy in customer-facing responses

- Fewer costly AI errors and hallucinations

- Better user experience and employee adoption

- Faster time-to-value for AI investments

3. Control, Customization, and Brand Alignment

Enterprises need AI that represents their brand and operates within their defined boundaries:

- Tone of Voice: Friendly, technical, formal, or industry-specific communication styles

- Functional Control: Custom workflows, role-based permissions, and multi-step reasoning chains

- Brand Safety: Responses aligned with corporate values, avoiding controversial or off-brand content

- Integration Depth: Deep connections to internal tools, databases, and business processes

This level of control is difficult to achieve with generic, off-the-shelf AI solutions, which expose only limited configuration options.

4. Cost Efficiency at Scale

While building custom GPTs requires upfront investment, the long-term economics can be compelling. Fine-tuning a smaller, open-source model (like Llama 3, Falcon, or Mistral) or using embedding-based RAG can prove 60-80% more cost-effective than relying entirely on hosted API calls at scale.

Consider this comparison:

- API-based approach: $0.03-$0.06 per 1K tokens with OpenAI GPT-4 (its launch list prices for input and output tokens). At enterprise scale (millions of queries per month), this can add up to $50,000-$200,000 per month

- Self-hosted approach: Upfront infrastructure cost plus smaller per-query costs for compute and operations, typically reducing ongoing expenses by 60-80% after the first year
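The arithmetic behind this comparison can be sketched in a few lines. The query volume, tokens per query, blended price, upfront cost, and the 70% saving (the midpoint of the 60-80% range above) are all illustrative assumptions, not measured figures:

```python
def monthly_api_cost(queries_per_month: int, tokens_per_query: int,
                     price_per_1k_tokens: float) -> float:
    """Monthly spend for a pay-per-token hosted API."""
    return queries_per_month * tokens_per_query / 1000 * price_per_1k_tokens


def breakeven_months(upfront_cost: float, api_monthly: float,
                     self_hosted_monthly: float) -> float:
    """Months until the upfront investment is recovered through monthly savings."""
    monthly_savings = api_monthly - self_hosted_monthly
    if monthly_savings <= 0:
        return float("inf")  # self-hosting never pays back
    return upfront_cost / monthly_savings


# Assumed workload: 2M queries/month, ~1K tokens each, $0.045 blended price per 1K tokens.
api_monthly = monthly_api_cost(2_000_000, 1_000, 0.045)
# Assumed: self-hosting runs at 30% of the API bill (a 70% reduction).
self_hosted_monthly = api_monthly * 0.30
# Assumed: $400,000 of upfront infrastructure and engineering cost.
months = breakeven_months(400_000, api_monthly, self_hosted_monthly)

print(f"API: ${api_monthly:,.0f}/month, self-hosted: ${self_hosted_monthly:,.0f}/month")
print(f"Break-even after about {months:.1f} months")
```

Under these assumptions the API bill lands inside the $50,000-$200,000 range quoted above, and the upfront cost is recovered in well under a year. Changing any one input shifts the break-even point considerably, so the calculation is worth rerunning with your own numbers.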

5. Competitive Advantage Through AI Differentiation

When every competitor uses the same ChatGPT API, there’s no differentiation. According to Harvard Business Review, companies that build proprietary AI capabilities are 3x more likely to achieve sustainable competitive advantages than those relying solely on third-party AI services.

The 5 key drivers behind the enterprise custom GPT movement

Real-World Use Cases: How Enterprises Are Using Custom GPTs

The applications of enterprise custom GPTs span virtually every business function. Here are the most impactful use cases driving adoption:

Customer Support and Service Automation

The Opportunity: Custom GPTs trained on your product documentation, support tickets, and customer interaction history can resolve 70-85% of Tier 1 support queries without human intervention.

- Automated ticket classification and routing

- Contextual response generation based on customer history

- Multi-language support with brand-consistent messaging

- Seamless escalation to human agents when needed

Example: A leading SaaS company deployed a custom GPT for customer support, reducing average resolution time from 4 hours to 12 minutes while maintaining a 94% customer satisfaction score.

Internal Knowledge Management

Enterprises are using custom GPTs to democratize access to institutional knowledge:

- Employee Onboarding: AI assistants that answer new hire questions about policies, procedures, and tools

- IT Helpdesk: Automated troubleshooting guides and resolution steps

- Legal and Compliance: Instant access to regulatory requirements and company policies

- Engineering Teams: Code documentation search, architecture decision records, and best practice guides

Marketing and Content Intelligence

Custom GPTs trained on brand guidelines, past campaigns, and market research enable:

- Brand-consistent content generation at scale

- Market analysis and competitive intelligence reports

- SEO-optimized content creation aligned with business goals

- Personalized marketing copy based on customer segments

Code Generation and Development Acceleration

Engineering teams are building custom GPTs fine-tuned on their codebase, coding standards, and architecture patterns. These internal AI coding assistants understand:

- Company-specific coding conventions and patterns

- Internal APIs and library documentation

- Security requirements and compliance rules

- Legacy system integration patterns

According to GitHub’s enterprise research, custom AI coding assistants can boost developer productivity by 35-55% when trained on organization-specific code.

The Technology Stack Behind Enterprise GPTs

Building a custom enterprise GPT involves several key technology layers. Understanding this stack helps business leaders make informed decisions about their AI investment:

Foundation Models

Enterprises have several options for their base model:

- Open-Source Models: Meta’s Llama 3, Mistral, Falcon, and DeepSeek offer full control over deployment and no per-token fees, though each comes with its own license terms

- Commercial Models: OpenAI’s GPT-4, Anthropic’s Claude, and Google’s Gemini provide cutting-edge capabilities with API access

- Hybrid Approach: Using commercial APIs for complex tasks while running open-source models for high-volume, routine operations
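The hybrid approach comes down to a routing decision per request. Here is a minimal sketch, assuming a simple keyword-and-length heuristic; the backend labels, the hint list, and the word threshold are hypothetical, and a production router would more likely use a trained classifier:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    backend: str  # "self_hosted" or "commercial_api" (hypothetical labels)
    reason: str


# Hypothetical phrases that suggest a request needs stronger reasoning.
COMPLEX_HINTS = ("analyze", "compare", "explain why", "multi-step")


def choose_backend(prompt: str, max_routine_words: int = 60) -> Route:
    """Send routine requests to the self-hosted model, complex ones to a commercial API."""
    text = prompt.lower()
    if any(hint in text for hint in COMPLEX_HINTS):
        return Route("commercial_api", "complex-task keyword")
    if len(text.split()) > max_routine_words:
        return Route("commercial_api", "long prompt")
    return Route("self_hosted", "short routine request")


print(choose_backend("Reset my password"))
print(choose_backend("Compare these two vendor contracts and explain why one is riskier"))
```

Keeping the decision in one function makes it easy to log why each request went where it did, which helps when tuning the split between cost and quality.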

Fine-Tuning and RAG Architecture

Two primary approaches for customization:

- Fine-Tuning: Continuing to train a pretrained model on your proprietary data so that domain knowledge and style are encoded in its weights. Best for: consistent, specialized responses across a narrow domain

- RAG (Retrieval Augmented Generation): Connecting the model to your knowledge base at query time. Best for: dynamic, up-to-date information retrieval

- Hybrid RAG + Fine-Tuning: Combining both approaches for maximum accuracy and flexibility, a common choice for enterprise deployments
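To make the RAG idea concrete, here is a minimal sketch of the retrieval step. It uses a toy bag-of-words similarity in place of a learned embedding model and an in-memory list in place of a vector database; the knowledge base contents and function names are made up for illustration:

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Assemble a prompt that grounds the model in the retrieved passages."""
    passages = retrieve(query, documents, k)
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(passages))
    return f"Answer using only the numbered context.\n\nContext:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base entries.
KNOWLEDGE_BASE = [
    "Refunds are issued within 14 days of a return request.",
    "The VPN client must be updated every quarter.",
    "New hires receive laptops on their first day.",
]

print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE, k=1))
```

The prompt returned by `build_prompt` would then be sent to whichever foundation model you chose. Because the knowledge lives in the document store rather than the model weights, updating an answer means updating a document, not retraining.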

Infrastructure and Deployment

Enterprise GPTs require robust infrastructure:

- GPU Compute: NVIDIA A100/H100 GPUs for model training and inference

- Vector Databases: Pinecone, Weaviate, or Milvus for efficient similarity search

- Orchestration: LangChain, LlamaIndex, or custom frameworks for managing AI workflows

- Monitoring: LLM observability tools for tracking performance, costs, and safety

The four-layer technology stack powering enterprise GPT solutions

Building Your Enterprise GPT: A Step-by-Step Approach

Successfully building an enterprise GPT requires a methodical approach. Here’s a four-phase framework spanning roughly 12 weeks:

Phase 1: Strategy and Use Case Definition (Weeks 1-2)

- Identify high-impact use cases with clear ROI potential

- Assess data readiness and quality

- Define success metrics and KPIs

- Evaluate build vs. buy options

- Establish governance and compliance framework

Phase 2: Data Preparation and Architecture (Weeks 3-4)

- Audit and clean proprietary datasets

- Design the RAG pipeline and vector database schema

- Set up secure development infrastructure

- Implement data governance and access controls
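As one illustration of what Phase 2 produces, the sketch below splits a document into overlapping chunks and attaches the metadata a vector database record might carry. The field names (including `access_group`) and the chunk sizes are assumptions chosen for the example, not a required schema:

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    position: int      # order of the chunk within its source document
    text: str
    access_group: str  # hypothetical field used to enforce access controls at query time


def chunk_document(doc_id: str, text: str, access_group: str,
                   chunk_words: int = 50, overlap_words: int = 10) -> list[Chunk]:
    """Split text into word-based chunks that overlap so context is not cut mid-thought."""
    if overlap_words >= chunk_words:
        raise ValueError("overlap_words must be smaller than chunk_words")
    words = text.split()
    step = chunk_words - overlap_words
    chunks = []
    for position, start in enumerate(range(0, len(words), step)):
        piece = words[start:start + chunk_words]
        chunks.append(Chunk(doc_id, position, " ".join(piece), access_group))
        if start + chunk_words >= len(words):
            break  # the last chunk already reaches the end of the document
    return chunks


sample = " ".join(f"w{i}" for i in range(120))
for chunk in chunk_document("hr-policy", sample, "hr"):
    print(chunk.position, chunk.text.split()[0], chunk.text.split()[-1])
```

Storing an access field alongside each chunk lets the retrieval layer filter results by the caller's permissions, which ties the data governance step to the pipeline design.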

Phase 3: Model Development and Fine-Tuning (Weeks 5-8)

- Select and configure the foundation model

- Fine-tune on domain-specific data

- Build the RAG retrieval pipeline

- Implement guardrails and safety measures

- Test for accuracy, bias, and hallucinations
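The testing step can start very small. Here is a minimal sketch of an evaluation harness, assuming each test case pairs a question with a phrase the answer must contain; `stub_model` is a stand-in for your real model call, and phrase matching is a deliberately crude check that a fuller suite would supplement with human or model-based grading:

```python
from typing import Callable


def evaluate(model: Callable[[str], str], test_cases: list[tuple[str, str]]) -> dict:
    """Score a model against (question, required_phrase) pairs."""
    failures = []
    for question, required_phrase in test_cases:
        answer = model(question)
        if required_phrase.lower() not in answer.lower():
            failures.append(question)
    total = len(test_cases)
    accuracy = (total - len(failures)) / total if total else 0.0
    return {"accuracy": accuracy, "failures": failures}


def stub_model(question: str) -> str:
    """Placeholder for a real model call; returns canned answers."""
    canned = {"What is the refund window?": "Refunds are issued within 14 days."}
    return canned.get(question, "I don't know.")


report = evaluate(stub_model, [
    ("What is the refund window?", "14 days"),
    ("Who approves travel?", "line manager"),
])
print(report)
```

Running the same test set after every fine-tuning run or prompt change gives a regression signal before anything reaches production.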

Phase 4: Integration and Deployment (Weeks 9-12)

- Integrate with existing business systems (CRM, ERP, etc.)

- Deploy to production with monitoring

- Set up feedback loops for continuous improvement

- Train end users and gather adoption metrics

Common Challenges and How to Overcome Them

Building enterprise GPTs isn’t without challenges. Here’s how successful organizations navigate the most common obstacles:

Data Quality Issues

The Problem: Garbage in, garbage out. Poor data quality leads to unreliable AI outputs.

The Solution: Invest in data cleaning, standardization, and governance before model training. According to Gartner, organizations that invest in data quality see 3x better AI outcomes than those that skip this step.

Hallucination Management

The Problem: AI models occasionally generate confident but incorrect responses.

The Solution: Implement RAG with citation tracking, confidence scoring, and human-in-the-loop verification for critical decisions. Use guardrails to flag low-confidence responses.
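A guardrail of this kind can be sketched as a simple gate in front of the user. The sketch assumes your pipeline already produces a confidence score between 0 and 1 and a list of cited sources; the `Answer` type, the threshold, and the decision labels are hypothetical:

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    confidence: float  # assumed to be a score in [0, 1] from your pipeline
    citations: list[str] = field(default_factory=list)


def review_decision(answer: Answer, min_confidence: float = 0.75) -> str:
    """Route uncited or low-confidence answers to a human reviewer."""
    if not answer.citations:
        return "needs_human_review"  # no source backs the claim
    if answer.confidence < min_confidence:
        return "needs_human_review"  # the pipeline itself is unsure
    return "auto_approve"


print(review_decision(Answer("Refunds take 14 days.", 0.92, ["refund-policy.pdf"])))
print(review_decision(Answer("Refunds take 30 days.", 0.41, ["refund-policy.pdf"])))
print(review_decision(Answer("Refunds take 7 days.", 0.95)))
```

The threshold is a business decision: a lower value sends fewer answers to reviewers, a higher value trades throughput for safety on critical decisions.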

Change Management and Adoption

The Problem: Employees resist new AI tools, leading to low adoption rates.

The Solution: Start with high-impact, easy-win use cases that demonstrate clear value. Provide training and create AI champions within each department.

Cost and Resource Planning

The Problem: Enterprise GPT projects can spiral in cost without proper planning.

The Solution: Start with a focused pilot project, prove ROI, then scale. Use experienced AI development partners to avoid costly mistakes and accelerate time-to-value.

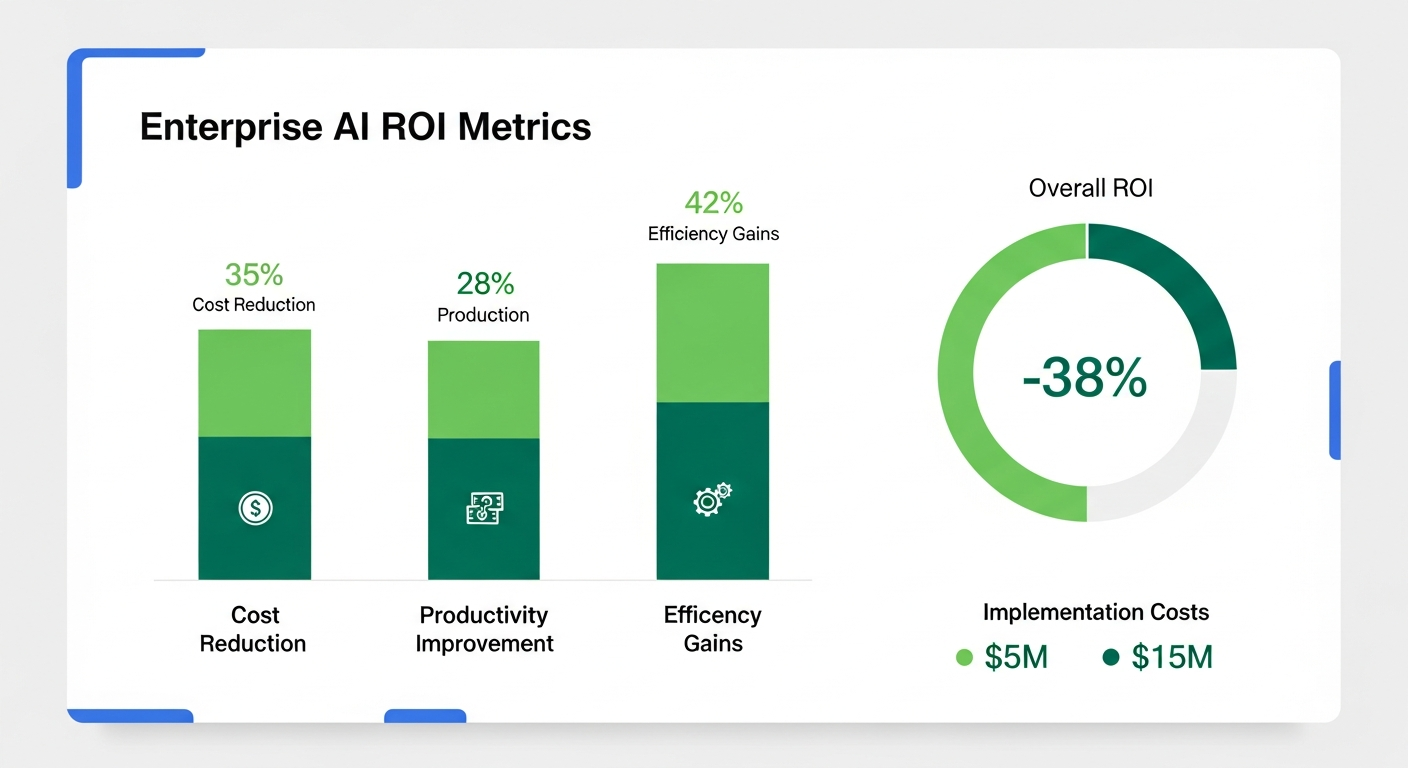

The ROI of Enterprise Custom GPTs

The measurable ROI of enterprise custom GPT implementations

The business case for custom enterprise GPTs is compelling when you look at the numbers:

- Customer Support: 40-60% reduction in support costs through automated resolution

- Employee Productivity: 25-35% improvement in knowledge worker productivity

- Developer Efficiency: 35-55% faster code development with custom AI assistants

- Content Creation: 10x faster content production while maintaining brand consistency

- Decision Making: 50% faster insights from unstructured data analysis

McKinsey estimates that enterprises fully leveraging generative AI see an average ROI of 200-400% within the first 18 months of deployment.

Enterprise GPT Security: What You Need to Know

Security is non-negotiable for enterprise AI. Here are the essential security measures:

- Data Encryption: Encryption of data both at rest and in transit

- Access Controls: Role-based access with multi-factor authentication

- Prompt Injection Protection: Guardrails against adversarial inputs

- Output Filtering: Automated content moderation and PII detection

- Audit Logging: Complete interaction logs for compliance and forensics

- Model Isolation: Air-gapped environments for sensitive deployments
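Two of these measures, output filtering and audit logging, combine naturally. The sketch below redacts two kinds of PII with regular expressions before writing a log record. The patterns cover only email addresses and US Social Security numbers and are illustrative; a real deployment would likely rely on a dedicated PII detection service:

```python
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_pii(text: str) -> str:
    """Replace matched emails and SSNs with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


def audit_record(user_id: str, prompt: str, response: str) -> str:
    """Build a JSON log line with a UTC timestamp and redacted content."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "prompt": redact_pii(prompt),
        "response": redact_pii(response),
    })


print(redact_pii("Mail jane.doe@example.com, SSN 123-45-6789"))
print(audit_record("u-17", "My email is jane.doe@example.com", "Noted."))
```

Redacting before logging keeps the audit trail useful for compliance and forensics without turning the log itself into a store of sensitive data.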

Conclusion: The Future Belongs to AI-Empowered Enterprises

The question is no longer whether enterprises should build custom GPTs—it’s how fast they can do it. With data privacy requirements tightening, the need for domain-specific intelligence growing, and the economics increasingly favoring self-hosted solutions, the enterprise GPT movement is accelerating at an unprecedented pace.

Organizations that invest in custom GPT capabilities today will have a significant competitive advantage tomorrow. Those that wait risk falling behind as their competitors use AI to automate operations, delight customers, and make smarter decisions.

The future of enterprise AI isn’t about using someone else’s model. It’s about building your own.

About DreamzTech: We’re a leading Generative AI development company specializing in custom GPT solutions, LLM development, and enterprise AI strategy. Our team combines deep technical expertise with practical business acumen to deliver AI systems that create measurable value.
