DreamzTech is an AWS Partner, Google Cloud Partner and Microsoft Solutions Partner. Its engineers hold AWS Solutions Architect, Google Cloud Architect and Azure Solutions Architect Expert certifications, plus AWS, Microsoft and Google ML and security specialty credentials, and the team has delivered 100+ AI agent implementations across 15 countries since 2012.

Foundation-model LLMs reason and plan. LangChain, LangGraph, AutoGen and CrewAI orchestrate. Vector databases (Pinecone, Weaviate, OpenSearch, pgvector) supply long-term memory. Each is powerful — but a production-ready AI agent system needs more: agent design, planner/executor architecture, tool inventories, function-call schemas, constitutional guardrails, observability, human-in-the-loop fallback and tight integration with your CRM, ERP, EHR or back-office.

That is what we build, on AWS, Azure or Google Cloud — composed with serverless functions, message buses, vector search, agent orchestration frameworks and API gateways into a HIPAA-eligible, SOC 2 Type II, ISO 27001-aligned production AI agent platform.

Quick Answer: An AI agent is a software system that uses a foundation-model LLM (GPT-4o, Claude 3.5 Sonnet, Llama 3.3, Gemini 2.0) to perceive context, reason over goals, call external tools and APIs, and take actions on behalf of a user — with memory and learning. An AI agent development company designs custom agents and multi-agent AI systems and integrates them into enterprise workflows.

DreamzTech builds custom AI agents from $25,000 (single-task tool-using agent on LangChain) up to $400,000+ (production multi-agent system on LangGraph + CrewAI with custom fine-tuning, agentic RAG and full CRM/ERP integration) — HIPAA-eligible, SOC 2 Type II, ISO 27001 / 27018 and FedRAMP-aligned on AWS, Azure and Google Cloud.

Reviewed by the DreamzTech AI Practice. Last updated 2026-05-07. Includes hands-on guidance from senior AI agent engineers and certified AWS / Microsoft / Google Cloud architects, drawing on 100+ production AI deployments across 15 countries.

Six tightly-scoped AI agent development service tracks — agent strategy and architecture, custom LLM agent development, multi-agent system orchestration, AI workflow automation, CRM/ERP integration, and managed agent operations. Engage one track or the full end-to-end build on AWS, Azure or Google Cloud.

Use-case discovery, agent vs RPA vs chatbot fit assessment, planner/executor architecture, model and framework selection (LangChain vs LangGraph vs AutoGen vs CrewAI), guardrail and observability roadmap.

Single-task and multi-step tool-using agents built on LangChain, LangGraph, AutoGen and Anthropic Claude tool-use — with function calling, agentic RAG, custom guardrails and your domain-specific prompts.

Planner-executor patterns, agent-to-agent communication, role-based crews (researcher, planner, executor, reviewer) and hierarchical supervisor-worker topologies via CrewAI, AutoGen Studio, LangGraph and Anthropic Multi-Agent.

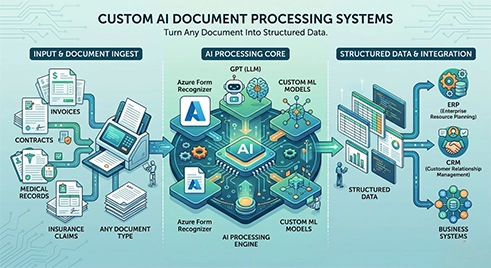

Automate cross-system business workflows — ticket triage, invoice processing, lead qualification, contract review — with AI agents that integrate natively into Salesforce, ServiceNow, SAP, Microsoft 365 and 50+ enterprise systems.

Native AI agent integration with Salesforce, HubSpot, Microsoft Dynamics 365, SAP, Oracle, NetSuite, Workday and ServiceNow via REST, GraphQL, Model Context Protocol and webhook patterns.

Production observability, prompt versioning, drift monitoring, quarterly model upgrades (GPT-4o → GPT-5, Claude 3.5 → Claude 4), guardrail tuning and 24/7 SRE for your AI agent platform.

AI agents are the right fit when workflows — IT tickets, customer support, sales lead qualification, contract review, claims triage, AP processing, HR onboarding — are too complex for RPA but too repetitive for senior staff.

A well-built AI agent platform delivers measurable ROI within 90 days. Across DreamzTech’s 100+ deployments, customers see 50–80% reduction in manual ticket handling, 3–5× lift in lead-qualification throughput, 60–75% faster contract review cycles, 24/7 always-on customer support, and consistent six-figure annual cost savings per deployed agent — with full audit trails, RBAC and human-in-the-loop guardrails on every high-risk action.

Every production AI agent we build follows a six-layer reference architecture covering perception, reasoning, memory, action, guardrails and observability — the blueprint that lets agents scale from one task to enterprise-wide automation.

Perception: Agents ingest context from chat, email, voice, APIs, documents, CRM events, ERP webhooks and tool-output streams via event-driven listeners.

Reasoning: A foundation-model LLM (GPT-4o, Claude 3.5 Sonnet, Llama 3.3, Gemini 2.0) plans, decomposes goals, reflects and routes between specialist agents.

Memory: Short-term scratchpad, long-term vector memory (Pinecone, Weaviate, OpenSearch, pgvector) and episodic memory across sessions for stateful agents.

Action: Through tool use, function calling and Model Context Protocol, agents invoke Salesforce, ServiceNow, SAP, REST APIs, databases and internal services.

Guardrails: Input/output filters, hallucination detection, PII redaction, jailbreak defense and human-in-the-loop escalation for high-risk agent actions.

Observability: LangSmith / Langfuse / Arize tracing, cost monitoring, prompt versioning, drift detection and accuracy dashboards end-to-end.
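As a rough illustration of how the six layers could fit together, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the function names, the keyword-based `reason` stub (a real system would call an LLM there), the `HIGH_RISK_TOOLS` set and the in-memory list standing in for a vector store. It is not DreamzTech's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentTrace:
    """Observability layer: collects one record per pipeline step."""
    events: List[str] = field(default_factory=list)

    def log(self, layer: str, detail: str) -> None:
        self.events.append(f"{layer}: {detail}")


def perceive(raw_event: dict) -> str:
    """Perception layer: normalise an inbound event into plain text."""
    return raw_event.get("text", "").strip()


def reason(message: str) -> dict:
    """Reasoning layer stub (hypothetical): a real agent would call an LLM here."""
    if "password" in message.lower():
        return {"tool": "reset_password", "args": {"user": "unknown"}}
    return {"tool": "escalate", "args": {"reason": "no matching tool"}}


# Hypothetical list of tools that always need human approval.
HIGH_RISK_TOOLS = {"wire_transfer"}


def guardrail_allows(plan: dict) -> bool:
    """Guardrail layer: block plans that target a high-risk tool."""
    return plan["tool"] not in HIGH_RISK_TOOLS


# Action layer: plain callables standing in for real integrations.
TOOLS: Dict[str, Callable[..., str]] = {
    "reset_password": lambda user: f"reset link sent to {user}",
    "escalate": lambda reason: f"escalated: {reason}",
}


def run_agent(raw_event: dict, tools: Dict[str, Callable[..., str]],
              memory: List[str], trace: AgentTrace) -> str:
    message = perceive(raw_event)
    trace.log("perception", message)
    plan = reason(message)
    trace.log("reasoning", plan["tool"])
    memory.append(message)  # memory layer: a list stands in for a vector store
    trace.log("memory", f"{len(memory)} items")
    if not guardrail_allows(plan):
        trace.log("guardrails", "blocked")
        return "needs human approval"
    trace.log("guardrails", "allowed")
    result = tools[plan["tool"]](**plan["args"])
    trace.log("action", result)
    return result
```

Calling `run_agent({"text": "I forgot my password"}, TOOLS, [], AgentTrace())` walks through all six layers once and leaves a step-by-step trace that an observability tool could consume.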

Buyers often compare AI agents with RPA, chatbots and copilots. This section makes the distinction crisp so you choose the right tool for each workflow.

| Capability | RPA Bot | Chatbot | Copilot | AI Agent |
|---|---|---|---|---|
| Reasoning | None (scripted rules) | Pattern-matched responses | LLM-assisted in-context | Multi-step LLM reasoning, planning, reflection |
| Tool Use | UI automation only | Limited / none | Within host app | REST, GraphQL, MCP (full enterprise stack) |
| Memory | Stateless scripts | Session-only | Per-conversation | Short-term + vector long-term + episodic memory |
| Autonomy | Triggered, deterministic | Response-only | Suggests, human accepts | Acts autonomously with guardrails & human-in-the-loop on high-risk |
| Adaptability | Brittle to UI changes | Limited to trained intents | Helps user adapt | Generalises to new cases via LLM reasoning & RAG |
| Best For | High-volume rule-based UI tasks | FAQ deflection, simple Q&A | Power-user augmentation in a host app | Multi-step cross-system workflows where rules vary case-to-case |

Our AI agent engineering depth spans 8 high-stakes industries — from healthcare prior-auth automation to BFSI fraud detection, legal contract intelligence and retail customer support.

Healthcare: HIPAA-eligible AI agents for prior-auth automation, clinical document Q&A, patient triage and physician copilots, with Epic, Cerner and FHIR integration.

Insurance: Multi-agent claims triage, FNOL automation, fraud detection and underwriting copilots, with Guidewire, Duck Creek and ACORD integration.

Legal: M&A due diligence agents, clause extraction, contract review and compliance copilots, with iManage, NetDocuments and CLM integration.

BFSI: AP automation, KYC/AML, lending-decision copilots and customer-service agents, with SAP, Oracle and Microsoft Dynamics 365 integration.

Public Sector: AWS GovCloud, Azure Government and Google Cloud Public Sector deployments; FedRAMP-aligned agents for permits, benefits and FOIA workflows.

Retail: Multi-agent customer service, product recommendation, inventory triage and supplier-comms copilots, with Shopify, Magento and SAP Commerce integration.

Manufacturing: Shop-floor copilots, predictive-maintenance triage, supplier-doc QA and 21 CFR Part 11 audit trails, with SAP, Oracle and MES integration.

HR/Talent: Onboarding copilots, employee self-service, policy Q&A and recruiter assistants, with Workday, BambooHR and SuccessFactors integration.

Three tightly-scoped AI agent build paths, one delivery team. Pick custom LLM agents for single-domain automation, multi-agent systems for cross-functional orchestration, or AI workflow automation for cross-system business processes — same case studies, same SLAs, different agent topology.

Bring your toughest agent use case — IT ticket deflection, sales lead qualification, contract review, claims triage — and a senior AI agent architect will walk you through the recommended pattern (LangGraph vs CrewAI vs AutoGen), an accuracy benchmark on representative data and a fixed-scope budget range. Live, on the call. Free, 30 minutes, no obligation.

AWS Partner, Google Cloud Partner and Microsoft Solutions Partner. AWS ML Specialty, Azure AI Engineer and Google ML Engineer certified team. 100+ AI agent deployments across healthcare, BFSI, legal, retail and the public sector — built in 15 countries since 2012.

Tell us about your agent use case, target workflow and the systems you need to integrate. A senior AI agent architect will reply within one business day with a reference-architecture sketch (LangGraph / CrewAI / AutoGen), a fixed-scope estimate and recommended next steps. No sales pitch, no obligation — just an expert response from an AWS / Microsoft / Google Cloud Partner who has shipped AI agents for Fortune 500 enterprises.

Explore how DreamzTech has built production AI agents and multi-agent systems on LangGraph, AutoGen, CrewAI, Amazon Bedrock and Azure OpenAI that reduce ticket handle time, lift lead conversion and automate document workflows for Fortune 500 enterprises and high-growth mid-market.

A Fortune 500 enterprise SaaS company replaced 60% of its tier-1 support burden with a DreamzTech-built multi-agent customer support system. Powered by LangGraph orchestration, Anthropic Claude 3.5 Sonnet for reasoning, Amazon Bedrock Knowledge Bases for product-doc RAG and Salesforce Service Cloud integration. Result: 75% tier-1 deflection, 42% FCR lift, $2.1M annual cost saved within 6 months — with full audit trails, PII redaction and human-escalation guardrails.

A global retail bank automated its IT service desk with a DreamzTech-built multi-agent ITSM platform — LangGraph orchestration plus OpenAI GPT-4o and AutoGen specialist agents for password reset, VPN, MFA and Microsoft 365 issues. Native ServiceNow integration with bi-directional ticket sync, audit logs and RBAC. Year 1: 68% L1 auto-resolution, 73% faster resolution, $1.8M saved across 18,000 monthly tickets.

A high-growth B2B SaaS company replaced manual lead qualification with a DreamzTech-built multi-agent sales system — CrewAI orchestration plus Anthropic Claude 3.5 Sonnet researcher agents, Apollo, ZoomInfo and 6sense intent-data enrichment and native Salesforce + HubSpot sync. Year 1: 4.2× SQL conversion lift, $14.2M new pipeline generated, 67% SDR productivity gain with audit logs and human-review checkpoints on every outbound message.

DreamzTech Solutions is an AWS Partner, Google Cloud Partner and Microsoft Solutions Partner with engineers holding AWS ML Specialty, Azure AI Engineer, Google ML Engineer and security certifications — plus 100+ production AI agent deployments across 15 countries since 2012.

A structured, transparent four-phase process designed for regulated, enterprise-grade AI agent delivery — from agent use-case discovery to production hand-off and ongoing optimization.

We study your workflows, ticket volumes, conversation logs and integration requirements; benchmark candidate agent patterns against your accuracy and ROI targets; run governance / compliance scoping under NIST AI RMF; and lock down scope with named success metrics.

Senior AI agent architects design the planner / executor topology, model routing strategy, tool inventory, function-call schemas, vector-memory layer and guardrails — on AWS, Azure or Google Cloud under each cloud's Well-Architected Framework.

We build agents on LangGraph / CrewAI / AutoGen, run automated and human-graded evals against your ground-truth dataset, fine-tune prompts and guardrails, and iteratively benchmark accuracy and cost against your team's manual baseline.

We build the full agent-fronted application — chat / portal / API interface, exception-handling, approval routing, observability dashboards (LangSmith / Langfuse / Arize) — and hand off with documentation, SRE runbook and SLA tier.

AWS Partner, Google Cloud Partner and Microsoft Solutions Partner-grade AI agent platform — foundation-model LLMs, agent orchestration, vector memory, guardrails and human-in-the-loop review. Production-ready in 4–14 weeks.

Every AI agent we build is wrapped in input-side and output-side guardrails — prompt-injection detection, jailbreak defense, PII redaction, profanity / toxicity filters and constitutional AI rules tailored to your industry. Anthropic Claude’s constitutional layer, Azure AI Content Safety, AWS Bedrock Guardrails and OpenAI moderation are layered to prevent unsafe agent actions before they reach your customers or systems.
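To make the input-side and output-side wrapping concrete, here is a minimal Python sketch. It is an illustration only: the regular expressions, the `INJECTION_PHRASES` list and the `guarded_call` helper are hypothetical, and a production system would rely on the managed services named above rather than on hand-written patterns like these.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs far more coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical phrases that suggest a prompt-injection attempt.
INJECTION_PHRASES = (
    "ignore previous instructions",
    "disregard your system prompt",
)


def redact_pii(text: str) -> str:
    """Replace e-mail addresses and US SSN-shaped numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)


def looks_like_injection(text: str) -> bool:
    """Input-side check: flag text containing a known injection phrase."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in INJECTION_PHRASES)


def guarded_call(user_input: str, model) -> str:
    """Wrap a model callable with an input guardrail and an output guardrail."""
    if looks_like_injection(user_input):
        return "Request blocked by input guardrail."
    reply = model(redact_pii(user_input))  # the model never sees raw PII
    return redact_pii(reply)               # output-side pass catches leaked PII
```

Here `model` can be any callable that maps a prompt string to a reply string, which keeps the guardrail wrapper independent of the LLM vendor.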

Granular RBAC limits which actions each agent can take and which users can invoke which agents — backed by enterprise SSO (Okta, Azure AD, Google Workspace, Ping Identity). Every agent invocation, tool call, model response and human approval is logged with immutable audit trails for SOX, 21 CFR Part 11, HIPAA and GDPR auditability.
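One common way to approximate this combination of role checks and audit logging is a permission table plus a hash-chained log, sketched below in Python. The role names, the `ROLE_PERMISSIONS` table and the `AuditLog` class are hypothetical. Note that hash chaining makes tampering detectable rather than impossible, so true immutability would still depend on write-once storage.

```python
import hashlib
import json

# Hypothetical role-to-action mapping.
ROLE_PERMISSIONS = {
    "support_agent": {"read_ticket", "reset_password"},
    "finance_agent": {"read_invoice", "approve_invoice"},
}


class AuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        self.entries.append({
            "record": record,
            "prev": prev_hash,
            "hash": self._digest(prev_hash, record),
        })

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(role: str, action: str, log: AuditLog) -> bool:
    """Check the action against the role's permissions and log the decision."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.append({"role": role, "action": action, "allowed": allowed})
    return allowed
```

Denied attempts are logged as well as allowed ones, since auditors usually want to see both.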

Our AI agent platforms are deployed on SOC 2 Type II-attested cloud infrastructure (AWS, Azure, Google Cloud) with ISO 27001 / 27018-aligned information-security management. HIPAA BAAs are signed across all HIPAA-eligible cloud services. Annual third-party penetration testing, cloud-native vulnerability scanning, and a secure-SDLC under each cloud’s Well-Architected Framework provide defence-in-depth.

Every production agent ships with NIST AI Risk Management Framework documentation — system cards, model cards, intended-use, prohibited-use, evaluation results and a continuous-monitoring plan. For EU deployments we provide EU AI Act conformity assessment for limited-risk and high-risk agent classifications.

Automatic detection and blocking of hallucinated tool calls, prompt-injection attempts in inbound chat / email / documents, and Data Loss Prevention rules that prevent agents from exfiltrating PII or PHI to public LLM endpoints. Critical safeguards for agents that touch customer data — legacy RPA and chatbot tools do not address these threats.
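A hallucinated tool call usually shows up as a tool name that does not exist or as arguments that do not match the tool's schema. The Python sketch below shows one simple way to catch both before execution. The `TOOL_SCHEMAS` registry and its two tools are hypothetical examples, not a real product's tool inventory.

```python
# Hypothetical registry: tool name -> required argument names and types.
TOOL_SCHEMAS = {
    "create_ticket": {"required": {"title": str, "priority": str}},
    "lookup_order": {"required": {"order_id": str}},
}


def validate_tool_call(call: dict) -> list:
    """Return a list of problems; an empty list means the call may run."""
    name = call.get("name")
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        # The model invented a tool that is not in the registry.
        return [f"unknown tool: {name}"]

    errors = []
    args = call.get("arguments", {})
    for field_name, field_type in schema["required"].items():
        if field_name not in args:
            errors.append(f"missing argument: {field_name}")
        elif not isinstance(args[field_name], field_type):
            errors.append(f"wrong type for {field_name}")
    # Reject arguments the schema does not know about.
    for extra in sorted(set(args) - set(schema["required"])):
        errors.append(f"unexpected argument: {extra}")
    return errors
```

A call is executed only when the returned list is empty; otherwise the errors can be fed back to the model for a retry or routed to a human.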

Deploy on your own cloud tenant with private OpenAI on Azure, Anthropic Claude on Amazon Bedrock, or self-hosted open-source LLMs (Llama 3.3, Mistral, Qwen) — so agent reasoning never leaves your security perimeter. Zero data retention agreements with all model vendors. Full offline / air-gapped deployment available for defense, intelligence and regulated finance clients.

Compliance and assurance badges: information security; BAA across all major clouds; responsible-AI documentation; annual audit certified; conformity assessment; ADA-accessible agent UI.

Built on the AWS / Azure / Google Cloud Well-Architected Frameworks — Reliability, Security, Cost Optimization, Operational Excellence and Performance Efficiency reviewed at every milestone.

Real feedback from CTOs, VPs of Customer Service, and Heads of Revenue Operations running production AI agents built by DreamzTech on LangGraph, CrewAI and Amazon Bedrock.

Every custom AI agent project at DreamzTech is built on a tightly-integrated, production-grade stack. LangGraph and AutoGen handle multi-agent orchestration and planner/executor topology; CrewAI structures role-based agent crews; LlamaIndex powers agentic RAG over your domain corpus; and Anthropic Claude, OpenAI GPT-4o, Llama 3.3 and Amazon Titan handle the reasoning layer — with Model Context Protocol bridging your enterprise tools.

Behind the agent layer: AWS Lambda / Azure Functions / Cloud Run for serverless tool execution; Amazon Bedrock / Azure OpenAI / Google Cloud Vertex AI for private LLM hosting; Pinecone / Weaviate / OpenSearch for vector memory; and LangSmith / Langfuse / Arize for observability. Everything runs inside your cloud tenant and your VPC, encrypted with your customer-managed KMS keys.

Choose the engagement model that fits your AI agent build — from senior-led dedicated teams to fixed-price MVPs and flexible time-and-materials.

A full-time team of AI agent engineers, prompt engineers, QA specialists and SRE — typically 3 to 8 engineers — embedded into your delivery cadence for 6–18 months of agent build, integration and operations.

Ideal for well-defined AI agent use cases — IT ticket deflection, sales lead qualification, contract review or claims triage — delivered as a fixed-scope, fixed-price MVP in 4–12 weeks on LangGraph / CrewAI / AutoGen.

Quickly add senior AI agent engineers, prompt engineers and LLM-ops specialists to your in-house team — fully managed by DreamzTech but reporting into your tech leadership. 1–3 month minimum, scale up or down monthly.

Maximum flexibility for evolving AI agent requirements — exploratory builds, agent-pattern R&D, prompt-engineering sprints and integration spikes. Pay only for time used; transparent monthly invoicing with senior-engineer day rates.

Multi-agent orchestration (LangGraph, CrewAI, AutoGen), foundation-model LLMs (GPT-4o, Claude 3.5 Sonnet, Llama 3.3), vector memory (Pinecone, Weaviate, OpenSearch), Model Context Protocol and Salesforce / ServiceNow / SAP integration — assembled into a production AI agent platform in 4–12 weeks.

Three real options exist for AI agent development: license a SaaS agent platform (Sierra, Decagon, Cognigy, Forethought, Moveworks), call hyperscaler agent APIs directly (Amazon Bedrock Agents, Azure AI Agents, OpenAI Assistants, Google ADK), or commission custom AI agent development. Each is right for different problems. Here is the honest comparison.

| Capability | Hyperscaler Agent APIs (Bedrock Agents / Azure AI Agents / OpenAI Assistants) | SaaS AI Agent Platforms (Sierra / Decagon / Cognigy / Moveworks) | DreamzTech Custom AI Agents |
|---|---|---|---|
| Agent Architecture | Vendor-locked agent runtime | Pre-built vertical agents, limited config | LangGraph / CrewAI / AutoGen with custom planner-executor and multi-agent topology |
| LLM Choice & Routing | Single vendor (OpenAI / Anthropic / AWS-only) | Vendor-managed model selection | GPT-4o, Claude 3.5/4, Llama 3.3, Gemini 2.0, Titan with intelligent cost-based routing |
| CRM / ERP Integration | Build connectors yourself | Limited to vendor’s pre-built connectors | Native Salesforce, ServiceNow, SAP, Dynamics 365, Workday, NetSuite, HubSpot integration |
| Guardrails & Governance | Basic moderation, no NIST AI RMF docs | Vendor-defined, opaque to enterprise audit | Constitutional AI guardrails, NIST AI RMF documentation, EU AI Act conformity, audit logs |
| Source Code & IP | Vendor-hosted, no source access | SaaS lock-in, monthly per-seat fees | You own the agent code, prompts, evals and infrastructure |
| Data Residency | Hyperscaler region, zero-retention contract | Vendor data center, multi-tenant | Your VPC, your KMS keys, on-premise / air-gapped option available |
| Best For | Simple single-domain agents, fast PoCs | Standard vertical use cases with light customisation | Enterprise multi-agent systems, deep CRM/ERP integration, regulated industries |

When DreamzTech is the right call: custom domains (legal contracts, healthcare prior-auth, regulated banking) where off-the-shelf SaaS plays trade flexibility for speed; high CRM/ERP integration depth that hyperscaler APIs do not cover; or multi-agent orchestration patterns (planner-executor, role-based crews) that need expert engineering. Choosing between AWS Bedrock Agents, Azure AI Agents and OpenAI Assistants for your foundation? AWS Bedrock leads on multi-model flexibility (Claude, Llama, Titan) and AWS-service integration. Azure AI Agents has the deepest Microsoft 365 / Dynamics tooling. OpenAI Assistants offers the smoothest function-calling DX. DreamzTech builds on whichever fits — and helps you make the trade-off call up front.
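The cost-based model routing mentioned in the comparison can be pictured as picking the cheapest model that clears a quality bar for the task. The Python sketch below assumes a hypothetical catalog: the model names, prices and quality scores are placeholders, not real vendor figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # hypothetical price, not a vendor quote
    quality: int               # hypothetical 1-5 score from internal evals


# Placeholder catalog for illustration only.
CATALOG = [
    ModelOption("small-model", 0.2, 2),
    ModelOption("mid-model", 1.0, 3),
    ModelOption("large-model", 5.0, 5),
]


def route(min_quality: int, catalog=CATALOG) -> ModelOption:
    """Return the cheapest model whose quality score meets the requirement."""
    eligible = [m for m in catalog if m.quality >= min_quality]
    if not eligible:
        raise ValueError(f"no model meets quality {min_quality}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)
```

In practice the quality requirement would come from per-task evaluation results, so that simple classification goes to a small model and multi-step reasoning goes to a larger one.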

Common questions from CIOs, CTOs and AI leaders evaluating AI agent development companies for enterprise deployment.

An AI agent is a software system built on a foundation-model LLM (GPT-4o, Claude 3.5 Sonnet, Llama 3.3) that perceives context, reasons over goals, calls external tools and APIs, and takes actions on behalf of a user — with memory and continuous learning. A chatbot answers questions in conversation; an AI agent autonomously executes multi-step workflows across systems. DreamzTech’s AI agents reset passwords in ServiceNow, qualify leads in Salesforce, review contracts in iManage and process invoices in SAP — without human handoff for in-scope tasks.

An AI agent development company designs, builds, integrates and operates custom AI agents and multi-agent AI systems for enterprises. DreamzTech offers six service tracks — strategy and agent architecture, custom LLM agent development, multi-agent system development, AI workflow automation, CRM/ERP integration and managed AI agent services — composed into production-ready agent platforms in 4 to 14 weeks on AWS, Azure or Google Cloud.

DreamzTech builds five primary agent types — (1) tool-using single-task agents for narrow automations, (2) ReAct agents that interleave reasoning and tool calls, (3) planner-executor agents with explicit goal decomposition, (4) multi-agent systems with role-based crews (researcher, writer, reviewer), and (5) hierarchical agents with supervisor-worker patterns. Selection depends on workflow complexity, latency budget, accuracy target and integration depth.
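To show what "interleaving reasoning and tool calls" means for a ReAct agent, here is a minimal loop in Python. The `scripted_llm` function is a hypothetical stand-in for a real model call, and `lookup_order` is an invented tool, so treat this as a sketch of the control flow rather than a working agent.

```python
def scripted_llm(history):
    """Stand-in for an LLM (hypothetical): decides the next step from the history."""
    observations = [value for kind, value in history if kind == "observation"]
    if not observations:
        return {
            "thought": "I need the order status.",
            "action": "lookup_order",
            "input": "A-100",
        }
    return {
        "thought": "I have what I need.",
        "final": f"Order A-100 is {observations[-1]}.",
    }


# Invented tool for illustration.
TOOLS = {"lookup_order": lambda order_id: "shipped"}


def react(question, llm, tools, max_steps=5):
    """Alternate thought -> action -> observation until the model gives a final answer."""
    history = [("question", question)]
    for _ in range(max_steps):
        step = llm(history)
        history.append(("thought", step["thought"]))
        if "final" in step:
            return step["final"], history
        observation = tools[step["action"]](step["input"])
        history.append(("action", step["action"]))
        history.append(("observation", observation))
    # A step budget keeps a confused model from looping forever.
    raise RuntimeError("step budget exhausted")
```

The step budget matters in production because each iteration costs tokens and latency.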

We build on OpenAI (GPT-4o, GPT-5, o1), Anthropic Claude (3.5 Sonnet, 4), Meta Llama 3.1 / 3.3, Google Gemini 2.0, Amazon Titan and Mistral. Orchestration: LangChain, LangGraph, AutoGen, CrewAI, LlamaIndex, Semantic Kernel. Hosted via Amazon Bedrock, Azure OpenAI, GCP Vertex AI or self-hosted on Kubernetes / EKS. Selection per use case based on accuracy, cost, latency, governance and data-residency constraints.

We build native integrations with Salesforce, HubSpot, Microsoft Dynamics 365, SAP, Oracle, NetSuite, Workday, ServiceNow and 50+ enterprise systems via REST, GraphQL, SOAP, webhook patterns and Anthropic’s Model Context Protocol (MCP). Agents authenticate with enterprise SSO (Okta, Azure AD), respect existing RBAC, log every action to immutable audit trails, and support both human-in-the-loop and fully-autonomous execution depending on action risk.

A focused tool-using agent MVP (single workflow, 2–3 tool integrations) ships in 4–6 weeks. A multi-agent system (3–5 specialised agents, 5–10 integrations, RAG, observability) ships in 8–14 weeks. An enterprise-wide agent platform with multiple crews, fine-tuning, compliance gates and 24/7 SRE ships in 14–22 weeks. All timelines include design, build, evals, integration, security review and production cutover with stage gates at each phase.

A focused single-task AI agent MVP starts at $25,000–$45,000 (LangChain, OpenAI / Claude API, 2–3 tool integrations, 4–6 weeks). A production multi-agent system runs $75,000–$200,000 (LangGraph or CrewAI, multiple specialist agents, vector memory, RAG, observability, 5–10 integrations, 8–14 weeks). Enterprise agent platforms with fine-tuning, multi-region deployment, FedRAMP / HIPAA controls and 24/7 SRE run $200,000–$400,000+.

RPA executes deterministic UI scripts (no reasoning, brittle to UI changes). Chatbots answer questions in dialog (no tool use, no actions). Copilots assist a human inside a host app (e.g. GitHub Copilot). AI agents reason, plan, call tools and act autonomously across systems. RPA suits high-volume rule-based work; chatbots suit FAQ deflection; copilots suit power-user augmentation; AI agents suit multi-step cross-system workflows where rules vary case-to-case. We help you choose the right tool per workflow.

Every agent is wrapped in input-side and output-side guardrails — prompt-injection detection, PII redaction, jailbreak defense, function-call validation and human-in-the-loop on high-risk actions. Infrastructure is SOC 2 Type II, ISO 27001 / 27018 attested with HIPAA BAAs across AWS, Azure and Google Cloud. Every agent invocation, tool call and LLM response is logged with immutable audit trails for SOX, HIPAA, GDPR and EU AI Act compliance. Private LLM deployment available for regulated finance, defense and healthcare.

Eight primary industries — Healthcare (HIPAA-eligible prior-auth, clinical Q&A, FHIR-integrated copilots), BFSI (KYC/AML, AP automation, lending copilots), Legal (M&A due-diligence, clause extraction, CLM agents), Insurance (claims triage, fraud detection, underwriting), Retail (customer service, recommendation, inventory), Manufacturing (shop-floor copilots, supplier-doc QA), Public Sector (FedRAMP / GovCloud / IL5 agents) and HR/Talent (onboarding, employee self-service, recruiter copilots).

A multi-agent system uses multiple specialised LLM agents that communicate, share memory and coordinate to solve complex workflows. Common patterns: planner-executor (one agent decomposes, another executes), role-based crew (researcher, writer, reviewer agents), and hierarchical (supervisor delegates to workers). You need a multi-agent system when no single prompt and tool-set can reliably handle the task — e.g., M&A due diligence across 50 documents needs a research-summarise-cross-check pipeline that a single agent struggles with.
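The planner-executor pattern with a reviewing agent can be sketched in a few lines of Python. All three roles below are hypothetical stubs: in a real system each would be a separate LLM-backed agent with its own prompt and tools.

```python
def planner(goal: str) -> list:
    """Planner stub: decomposes a goal into ordered tasks (an LLM call in practice)."""
    return [f"research: {goal}", f"summarise: {goal}", f"cross-check: {goal}"]


def executor(task: str) -> str:
    """Executor stub: carries out one task and reports the outcome."""
    kind, _, subject = task.partition(": ")
    return f"{kind} done for {subject}"


def reviewer(results: list) -> bool:
    """Reviewer stub: approves only if every task reports completion."""
    return all("done" in result for result in results)


def run_crew(goal: str) -> dict:
    """Planner decomposes, executor runs each task, reviewer signs off."""
    tasks = planner(goal)
    results = [executor(task) for task in tasks]
    return {"tasks": tasks, "results": results, "approved": reviewer(results)}
```

Separating the roles means each agent's prompt stays narrow, which is the main reason the pattern can be more reliable than one large prompt.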

Managed AI Agent Services cover 24/7 production observability (LangSmith, Langfuse, Arize), prompt versioning and A/B testing, drift and hallucination monitoring, quarterly model upgrades (e.g., GPT-4o → GPT-5, Claude 3.5 → Claude 4), guardrail tuning, evaluation against new ground-truth data, SLA-backed incident response and capacity / cost optimization. Three tiers — Bronze (business hours), Silver (extended), Gold (24/7 with named SRE).

Multi-layered defense: (1) guardrails block obvious hallucinations at output time; (2) function-call validation rejects malformed tool invocations; (3) confidence scoring routes low-confidence outputs to human review; (4) grounded RAG forces answers from your vetted corpus; (5) constitutional rules enforce industry-specific constraints (no medical advice, no legal opinion, etc.); (6) observability dashboards flag drift and accuracy regressions. For high-stakes actions (payments, PHI, legally binding commitments), human-in-the-loop approval is mandatory.
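The routing logic behind confidence scoring and mandatory approval can be illustrated with a small Python function. The `HIGH_STAKES` categories and the 0.8 threshold are assumptions chosen for the example; real values would be set per workflow.

```python
# Hypothetical action categories that always require a human decision.
HIGH_STAKES = {"payment", "phi_disclosure", "contract_signature"}


def route_output(action_type: str, confidence: float, threshold: float = 0.8) -> str:
    """Decide whether an agent output runs automatically or goes to a person."""
    if action_type in HIGH_STAKES:
        # High-stakes actions go to a human regardless of model confidence.
        return "human_approval"
    if confidence < threshold:
        return "human_review"
    return "auto_execute"
```

Checking the high-stakes rule first ensures that a very confident model can never bypass approval on a payment or a PHI disclosure.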

Yes. Three approaches: (1) Use Azure OpenAI, Amazon Bedrock or GCP Vertex with zero-retention contracts — data never trains the public model. (2) Self-host open-source LLMs (Llama 3.3, Mistral, Qwen) on your own Kubernetes / EKS — fully air-gappable. (3) Use Anthropic’s enterprise Claude with no-training agreement. For all three: data stays in your VPC, encrypted at rest with your KMS keys, with DLP and PII redaction on inbound and outbound flows.

Zapier / Make / Power Automate handle rule-based “if X then Y” triggers between SaaS apps — deterministic, brittle to edge cases, no reasoning. AI agents handle workflows where rules vary case-to-case, where data extraction or summarisation is needed, or where the next step depends on judgment. Best practice: use Zapier / Make for simple deterministic glue and AI agents for the reasoning-required steps — combined, they cover 90% of business workflow automation.

We build an evaluation harness for every agent — ground-truth dataset (50–500 labelled examples), automated eval pipeline (LangSmith / Promptfoo / Braintrust), human-grading rubrics for subjective outputs, and continuous shadow-mode testing in production. Accuracy improves via prompt iteration, few-shot examples, fine-tuning, better RAG retrieval, model swapping (sometimes Claude 3.5 beats GPT-4o for a specific task), or breaking one agent into multiple specialised agents.
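At its core, an evaluation harness of this kind runs the agent over labelled examples and reports accuracy along with the failures. The Python sketch below uses a hypothetical four-example dataset and a keyword-based `keyword_agent` in place of a real agent, purely to show the shape of the loop.

```python
def evaluate(agent, dataset):
    """Run the agent on every labelled example and collect mismatches."""
    if not dataset:
        raise ValueError("dataset must contain at least one example")
    failures = []
    for example in dataset:
        predicted = agent(example["input"])
        if predicted != example["expected"]:
            failures.append({
                "input": example["input"],
                "expected": example["expected"],
                "predicted": predicted,
            })
    accuracy = (len(dataset) - len(failures)) / len(dataset)
    return accuracy, failures


def keyword_agent(text: str) -> str:
    """Toy ticket classifier standing in for a real agent (hypothetical)."""
    return "billing" if "invoice" in text.lower() else "technical"


# Hypothetical ground-truth examples.
DATASET = [
    {"input": "My invoice is wrong", "expected": "billing"},
    {"input": "VPN will not connect", "expected": "technical"},
    {"input": "Refund my last charge", "expected": "billing"},
    {"input": "Laptop will not boot", "expected": "technical"},
]
```

The returned failure list is what drives the next iteration: each mismatch points at a prompt, retrieval or routing change to try.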

LangChain is the foundational toolkit — chains, agents, retrievers, integrations. LangGraph (by LangChain Inc) adds explicit state machines for complex multi-step agents with cycles. AutoGen (by Microsoft) excels at conversational multi-agent systems where agents debate and refine outputs. CrewAI is opinionated for role-based crews — define roles, assign tasks, watch the crew execute. We use LangGraph for stateful workflows, CrewAI for role-based pipelines, AutoGen for agent-to-agent debate patterns. Often combined.
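The idea of an explicit state machine with cycles can be shown without any framework. The plain-Python sketch below is not LangGraph's API: the node functions, the `NODES` table and the acceptance rule are hypothetical, and it only illustrates how a draft-review loop revisits a node until a condition is met.

```python
def draft(state: dict) -> str:
    """Node: produce a new draft and hand over to review."""
    state["attempts"] += 1
    state["draft"] = f"draft v{state['attempts']}"
    return "review"


def review(state: dict) -> str:
    """Node: hypothetical rule that accepts only from the second attempt onward."""
    return "done" if state["attempts"] >= 2 else "draft"


# Each node returns the name of the next node, so edges can form cycles.
NODES = {"draft": draft, "review": review}


def run_graph(start: str, state: dict, max_steps: int = 10):
    """Follow edges until the terminal 'done' node, with a step limit as a safety net."""
    node, path = start, []
    for _ in range(max_steps):
        if node == "done":
            return state, path
        path.append(node)
        node = NODES[node](state)
    raise RuntimeError("graph did not terminate")
```

Starting from `run_graph("draft", {"attempts": 0})`, the review node sends control back to draft once before accepting, which is the cycle a linear chain cannot express.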

Most enterprises see 50–75% deflection of tier-1 tickets after a 4-week pilot, with the most complex tickets (and emotionally sensitive ones) still escalated to human agents. Agents excel at password resets, order status, simple troubleshooting and product Q&A. Humans excel at complaint handling, complex multi-issue tickets and brand-relationship work. The right model is hybrid: agents handle 50–75% of ticket volume at a fraction of the cost, while humans focus on high-value cases.

Native integration via Salesforce REST / GraphQL APIs, Tooling API and Apex for custom logic; ServiceNow Table API, Scripted REST and MID Server for on-premise data. Agents authenticate via OAuth 2.0 with named credentials, respect record-level RBAC, support Field Service Lightning and Service Cloud Voice in Salesforce, and ITSM, ITOM, HRSD, CSM and SecOps in ServiceNow. All actions logged to native audit tables.

All three are good starting points for simple agents — but for production, custom agent orchestration on LangGraph / CrewAI / AutoGen gives more control. OpenAI Assistants: smoothest function-calling DX, but locked to OpenAI models. Amazon Bedrock Agents: best multi-model flexibility (Claude, Llama, Titan) and AWS-service depth. Azure AI Agents: deepest Microsoft 365 / Dynamics / Power Platform integration. DreamzTech evaluates per use case and recommends — sometimes hyperscaler-native, sometimes custom.

RAG (retrieval-augmented generation) lets agents look up facts from your document corpus at inference time — fast to update, cheap, no model training needed. Fine-tuning bakes patterns into the model weights — better for stylistic consistency, complex reasoning patterns or efficiency at scale. Best practice: RAG for facts and references, fine-tuning for tone and reasoning patterns. We help you choose and combine. Most enterprise agents use RAG; only ~20% need fine-tuning.
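The retrieval step of RAG can be sketched with nothing more than word counts and cosine similarity. This Python example is a simplification: the three-document `CORPUS` is invented, and the bag-of-words `embed` function stands in for a learned embedding model and a vector database.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lower-cased word counts instead of a learned vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Invented policy snippets standing in for a vetted document corpus.
CORPUS = [
    "Refunds are issued within 14 days of a return request.",
    "Password resets require multi-factor verification.",
    "Invoices are payable within 30 days of receipt.",
]


def retrieve(query: str, corpus=CORPUS, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query: str) -> str:
    """Ground the model by placing retrieved text ahead of the question."""
    context = "\n".join(retrieve(query))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Updating the agent's knowledge then means editing the corpus, with no model retraining, which is the property that makes RAG fast and cheap to maintain.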

Yes. We build voice-enabled agents using OpenAI Realtime API, Anthropic Claude on Amazon Bedrock with voice gateways, and Azure AI Speech. Multimodal vision is supported on Claude 3.5 Sonnet, GPT-4o and Gemini 2.0 — agents that read photos, screenshots, PDFs and video frames. Common use cases: voice IVR replacement, vision-based claims processing, AR-based field service copilots, multimodal customer support.

MCP is Anthropic’s open standard for connecting AI agents to external tools, data sources and services — a “USB-C for AI tools.” It standardises how agents discover and call tools across providers (OpenAI, Anthropic, Google) without custom adapters per LLM. DreamzTech is an early MCP adopter — every new agent we build exposes its tools as MCP servers, so your agents are portable across foundation-model providers and your tools work across multiple agents.
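MCP messages are JSON-RPC 2.0, with `tools/list` for discovery and `tools/call` for invocation. The Python sketch below mimics those message shapes with an in-process handler. It is a simplified illustration under stated assumptions: there is no transport, no initialization handshake and no SDK, and the `get_order_status` tool is hypothetical.

```python
# Hypothetical tool exposed by the server.
TOOLS = {
    "get_order_status": {
        "description": "Look up the status of an order (hypothetical tool).",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
        "handler": lambda args: f"Order {args['order_id']} is shipped",
    }
}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 request shaped like MCP tool discovery or invocation."""
    method, req_id = request["method"], request["id"]
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {"jsonrpc": "2.0", "id": req_id, "result": {"tools": tools}}
    if method == "tools/call":
        params = request["params"]
        tool = TOOLS.get(params["name"])
        if tool is None:
            # -32602 is the JSON-RPC "invalid params" code.
            return {"jsonrpc": "2.0", "id": req_id,
                    "error": {"code": -32602, "message": "Unknown tool"}}
        text = tool["handler"](params.get("arguments", {}))
        return {"jsonrpc": "2.0", "id": req_id,
                "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    # -32601 is the JSON-RPC "method not found" code.
    return {"jsonrpc": "2.0", "id": req_id,
            "error": {"code": -32601, "message": "Method not found"}}
```

Because the client only depends on these message shapes, the same tool server can be called by agents running on different foundation models.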

Book a free 30-minute architect call. Bring your toughest workflow — IT ticket deflection, sales lead qualification, contract review, claims triage — and a senior AI agent architect will walk you through the recommended pattern (LangGraph vs CrewAI vs AutoGen vs hyperscaler-native), an accuracy benchmark on representative data, and a fixed-scope budget range. No sales pitch. No obligation. Then we send a written proposal within 1 business day with a reference architecture, scope and engagement model.